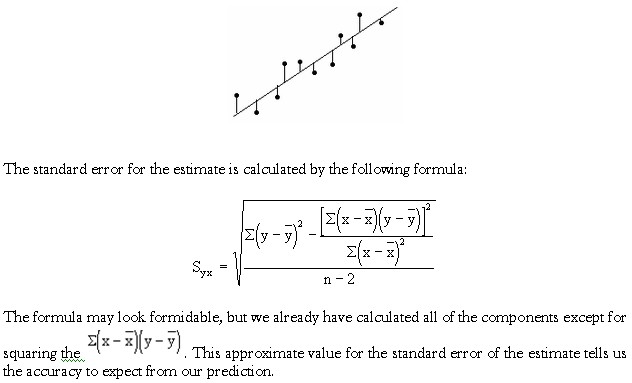

In a simple linear regression equation, the mean or expected value of y is a linear function of x: E(y) = β₀ + β₁x. If the value of β₁ is zero, E(y) = β₀ + (0)x = β₀. In this case, the mean value of y does not depend on the value of x, and hence we would conclude that x and y are not linearly related. Alternatively, if the value of β₁ is not equal to zero, we would conclude that the two variables are related. Thus, to test for a significant regression relationship, we must conduct a hypothesis test to determine whether the value of β₁ is zero. Two tests are commonly used, the t test and the F test. Both require an estimate of σ², the variance of the error term e in the regression model.

Estimate of σ²

From the regression model and its assumptions we can conclude that σ², the variance of e, also represents the variance of the y values about the regression line. Recall that the deviations of the y values about the estimated regression line are called residuals. Thus, SSE, the sum of squared residuals, is a measure of the variability of the actual observations about the estimated regression line. The mean square error (MSE) provides the estimate of σ²; it is SSE divided by its degrees of freedom.

Every sum of squares has associated with it a number called its degrees of freedom. Statisticians have shown that SSE has n − 2 degrees of freedom because two parameters (β₀ and β₁) must be estimated to compute SSE. Thus, the mean square error is computed by dividing SSE by n − 2: MSE = SSE / (n − 2). MSE provides an unbiased estimator of σ².
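The estimate of σ² described in this section can be illustrated with a minimal Python sketch. It assumes the ordinary least squares formulas for the intercept and slope, which the text does not spell out. The function names and the five data points are hypothetical, chosen only so the arithmetic is easy to follow.

```python
def fit_line(x, y):
    """Least squares estimates (b0, b1) for the line y = b0 + b1 * x."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    b1 = sxy / sxx               # estimated slope
    b0 = y_bar - b1 * x_bar      # estimated intercept
    return b0, b1


def sse_and_mse(x, y):
    """Return (SSE, MSE), where MSE = SSE / (n - 2)."""
    n = len(x)
    if n <= 2:
        raise ValueError("need more than two observations for n - 2 degrees of freedom")
    b0, b1 = fit_line(x, y)
    # Residuals: deviations of the y values about the estimated regression line.
    residuals = [yi - (b0 + b1 * xi) for xi, yi in zip(x, y)]
    sse = sum(r ** 2 for r in residuals)
    mse = sse / (n - 2)          # two estimated parameters use up two degrees of freedom
    return sse, mse


# Made-up data for illustration only.
x = [1, 2, 3, 4, 5]
y = [2, 4, 5, 4, 5]
sse, mse = sse_and_mse(x, y)
print(f"SSE = {sse:.2f}, MSE = {mse:.2f}")  # SSE = 2.40, MSE = 0.80
```

With these five points the fitted line is approximately ŷ = 2.2 + 0.6x, so SSE is about 2.4 and dividing by n − 2 = 3 gives an estimate of σ² of about 0.8.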
